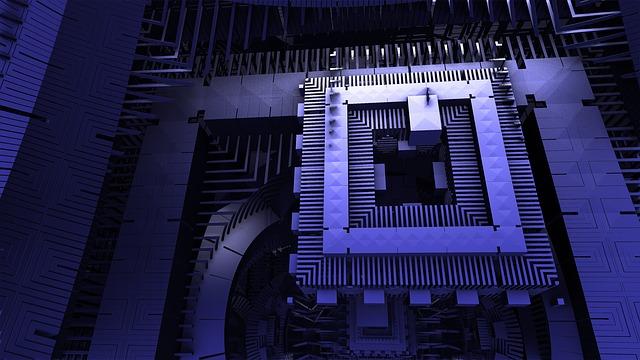

Exascale computing is a new class of scientific computation that has recently arrived in the computing industry.

Exascale computing and quantum computing are the big things that will change the dynamics of large data processing.

Exascale computing aims to overcome the limitations of traditional scientific calculation and computing.

What is Exascale computing?

In layman’s terms, an exascale computing system is one capable of performing at least one quintillion (10¹⁸) floating-point operations per second, a rate known as one exaFLOPS.

Exascale computers are massively parallel scientific computers, built from a very large number of processor cores, that compute at a rate of at least 10¹⁸ floating-point operations per second.
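To put that rate in perspective, here is a minimal sketch that compares how long a workload would take at exascale and at petascale (10¹⁵ operations per second). The workload size of 10²¹ operations is a hypothetical figure chosen only for illustration, and the calculation assumes the machine sustains its peak rate, which real applications rarely do.

```python
# Rates in floating-point operations per second (FLOPS).
EXAFLOPS = 10**18   # one quintillion operations per second
PETAFLOPS = 10**15  # one quadrillion operations per second


def seconds_to_complete(total_operations: int, flops: int) -> float:
    """Idealized run time: total work divided by a sustained rate."""
    return total_operations / flops


# Hypothetical workload of 10^21 floating-point operations (an assumption).
workload = 10**21

exa_seconds = seconds_to_complete(workload, EXAFLOPS)
peta_seconds = seconds_to_complete(workload, PETAFLOPS)

print(f"Exascale:  {exa_seconds:,.0f} s (about {exa_seconds / 60:.1f} minutes)")
print(f"Petascale: {peta_seconds:,.0f} s (about {peta_seconds / 86_400:.1f} days)")
```

Under these assumptions, the same job that occupies a petascale machine for days finishes on an exascale machine in minutes.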

Exascale computers can process large volumes of data and produce results far faster than earlier systems. They have therefore given a new dimension to scientific computation.

To know more about this new form of scientific computation, it is important to have a clear idea of its benefits and challenges.

Advantages of Exascale computing

With an exascale computing system, researchers and programmers can expect much faster computation and, because larger and more detailed models become practical, more accurate results.

Computation at this scale can also be much cheaper than other forms of scientific research.

These systems make it possible to crunch numbers much faster and in a much more accurate manner.

Researchers and programmers can therefore expect applications built for exascale systems to perform much better than those built with older, more traditional methods of scientific computation.

Disadvantages of Exascale computing

Exascale computing technology also comes with challenges. These lie less in using exascale computers than in designing the systems and the software that runs on them.

- Exascale computers are complex in design

The key challenge for exascale systems lies in energy efficiency: getting as much computation as possible out of the energy needed to run a specific scientific calculation or application.

Exascale systems, therefore, require advanced programming techniques to make efficient use of the machines' many cores.

Thus, developing these kinds of programs is a challenge for scientists and programmers alike.
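To make the energy constraint concrete, the following sketch works out how many operations per watt a machine must deliver to reach one exaFLOPS within a fixed power budget. The 20 megawatt budget and the function names are assumptions chosen for illustration, not figures from any particular system.

```python
EXAFLOPS = 10**18  # floating-point operations per second


def flops_per_watt(flops: float, power_watts: float) -> float:
    """Energy efficiency: operations per second delivered per watt drawn."""
    return flops / power_watts


def energy_joules(total_operations: float, flops: float, power_watts: float) -> float:
    """Energy used by a job: run time (s) multiplied by power draw (W)."""
    return (total_operations / flops) * power_watts


# Assumed power budget of 20 MW (illustrative only).
power_budget_watts = 20_000_000

efficiency = flops_per_watt(EXAFLOPS, power_budget_watts)
gigaflops_per_watt = efficiency / 1e9

print(f"Required efficiency: {gigaflops_per_watt:.0f} GFLOPS per watt")
```

The tighter the power budget, the more work each watt has to do, which is why efficiency drives so much of the hardware and software design.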

- Exascale computers require a large data set

The development of these kinds of applications requires large amounts of scientific data.

- It is difficult to store large amounts of data

Storing scientific data at this scale takes a great deal of memory and storage capacity.

In essence, the larger the data set, the more storage space is needed. Thus, developing an application that manages its data well can also be a challenge.
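As a rough illustration of how quickly storage needs grow, this sketch estimates the memory footprint of a three-dimensional simulation grid stored as 8-byte double-precision values. The grid dimensions and the `fields` parameter are hypothetical, chosen only to show the arithmetic.

```python
BYTES_PER_DOUBLE = 8  # size of one double-precision floating-point value


def grid_bytes(nx: int, ny: int, nz: int, fields: int = 1) -> int:
    """Bytes needed to hold `fields` double-precision values per grid cell."""
    return nx * ny * nz * fields * BYTES_PER_DOUBLE


def to_terabytes(n_bytes: int) -> float:
    """Convert bytes to decimal terabytes (10^12 bytes)."""
    return n_bytes / 10**12


# Hypothetical grid: 10,000 cells along each axis, one value per cell.
footprint = grid_bytes(10_000, 10_000, 10_000)
print(f"One field:   {to_terabytes(footprint):.0f} TB")

# Tracking five values per cell (again an assumption) multiplies the cost.
print(f"Five fields: {to_terabytes(grid_bytes(10_000, 10_000, 10_000, fields=5)):.0f} TB")
```

Doubling the resolution along each axis multiplies the footprint by eight, so storage demands grow much faster than the grid's side length.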

- High processing power is required

Another challenge is the application’s ability to process large amounts of data.

In essence, the greater the processing power of the machine, the faster the data will be processed.

Developing the right application therefore becomes all the more important, because scientists and programmers can only benefit from this processing power if their software is able to use it.

- The inaccuracy of data as a result of the complexity

Apart from storage and processing power, another big challenge lies in designing software that can execute scientific algorithms accurately at this scale.

Fortunately, there are organizations like the Massachusetts Institute of Technology (MIT) that have already made significant advancements in designing scientific software applications that can take care of these exascale challenges, and enable proper use of this technology.

One such example is the Exascale Accelerator. Built at MIT, the Exascale Accelerator allows users to perform thousands of calculations per second without experiencing any loss in accuracy.

Its calculations can run simultaneously on different cores, and it is designed so that running them in parallel does not reduce the accuracy of the results.
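The general idea of splitting a calculation across workers while protecting accuracy can be sketched as follows. This is not the Exascale Accelerator's method, only a small illustration: the helper names are hypothetical, Python threads stand in for the cores of a real machine, and `math.fsum` is used because it tracks rounding error, so the combined result does not depend noticeably on how the data was divided.

```python
import math
from concurrent.futures import ThreadPoolExecutor


def chunked(values, n_chunks):
    """Split `values` into at most `n_chunks` contiguous slices."""
    size = max(1, math.ceil(len(values) / n_chunks))
    return [values[i:i + size] for i in range(0, len(values), size)]


def parallel_sum(values, workers=4):
    """Sum each chunk in its own worker, then combine the partial sums."""
    chunks = chunked(values, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(math.fsum, chunks))
    return math.fsum(partials)


values = [0.1] * 1_000_000

serial = math.fsum(values)       # single-worker reference result
parallel = parallel_sum(values)  # same data, split across four workers

print(f"serial:   {serial!r}")
print(f"parallel: {parallel!r}")
print("agree:", math.isclose(serial, parallel, rel_tol=1e-12))
```

A naive running total can give slightly different answers depending on the order in which numbers are added, which is one reason parallel scientific software has to treat accuracy as a design concern rather than assume it.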